4 Top Security Automation Use Cases

Gartner recently declared that SOAR (security orchestration, automation, and response) is being phased out in favor of generative AI-based solutions. Against that backdrop, this article explores four key security automation use cases in detail.

1. Enriching Indicators of Compromise (IoCs)

Indicators of compromise (IoCs), such as suspicious IP addresses, domains, and file hashes, are vital in identifying and responding to security incidents.

Manually gathering information about these IoCs from various sources can be labor-intensive and slow down the response process.

Automating the enrichment of IoCs can greatly enhance the efficiency of your security operations.

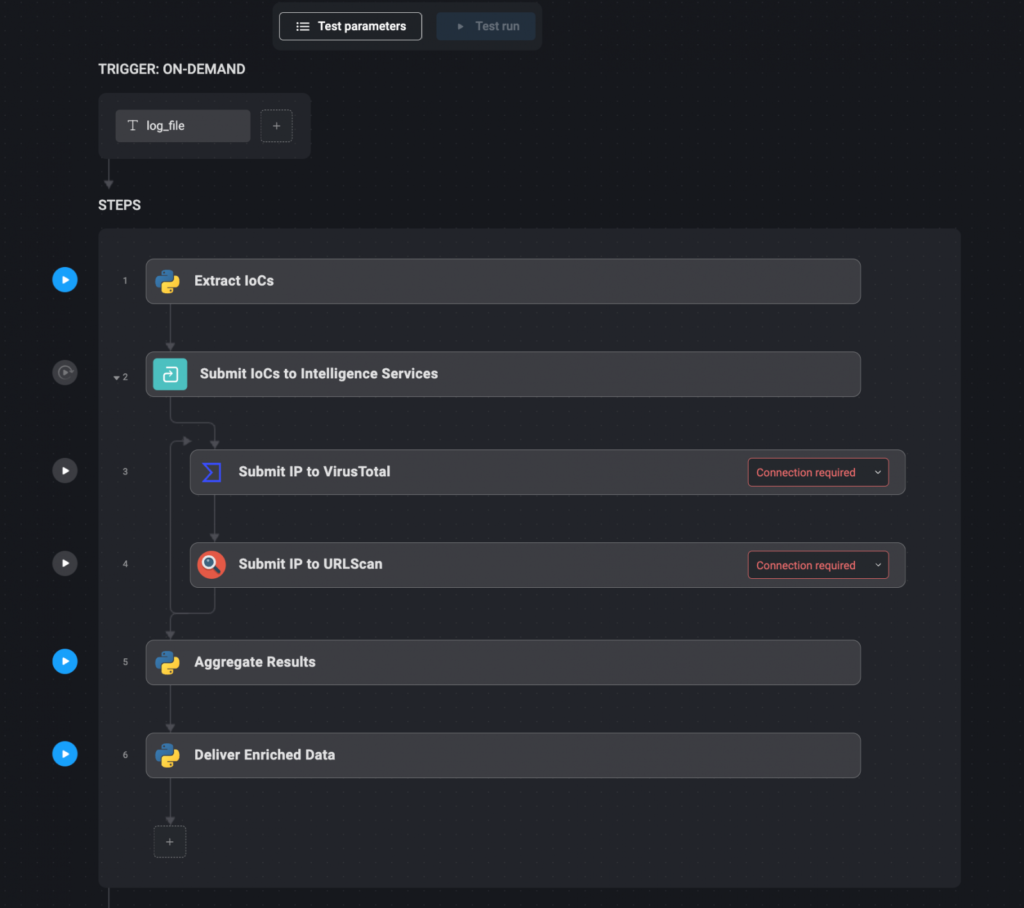

Automation workflow:

- Extract IoCs: Automatically extract relevant IoCs from security logs or alerts using text parsing tools or other automated methods.

- Submit IoCs to Intelligence Services: Once extracted, the IoCs are automatically submitted to various threat intelligence services, such as VirusTotal, URLScan, and AlienVault, via their APIs. These services can provide additional context, such as whether the IP address has been associated with known threats or if the domain has been flagged for suspicious activity.

- Aggregate Results: The results from these intelligence services are aggregated into a single, comprehensive report. This step ensures that all relevant information is available in one place, making it easier for security analysts to assess the threat.

- Deliver Enriched Data: The enriched IoC data is then delivered through communication channels like Slack, or directly added to the relevant incident ticket within the security management system. This ensures that all necessary information is immediately accessible to those who need it.
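The extract, submit, and aggregate steps above can be sketched in Python. This is a minimal illustration under stated assumptions, not a production implementation: the regular expressions are deliberately simplified, and `extract_iocs`, `enrich`, and the `providers` mapping are hypothetical names. Each provider is passed in as a plain callable, so the real VirusTotal, URLScan, or AlienVault API calls (not shown here) would be wrapped in functions of that shape.

```python
import re
from collections import defaultdict

# Simplified patterns (assumption): real extraction needs stricter validation,
# e.g. octet range checks for IPs and filtering file names that look like domains.
IPV4_RE = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")
SHA256_RE = re.compile(r"\b[a-fA-F0-9]{64}\b")
DOMAIN_RE = re.compile(r"\b(?:[a-z0-9-]+\.)+[a-z]{2,}\b", re.IGNORECASE)


def extract_iocs(text):
    """Return the unique IPs, SHA-256 hashes, and domains found in text."""
    return {
        "ip": sorted(set(IPV4_RE.findall(text))),
        "sha256": sorted({h.lower() for h in SHA256_RE.findall(text)}),
        "domain": sorted({d.lower() for d in DOMAIN_RE.findall(text)}),
    }


def enrich(iocs, providers):
    """Query every provider for every IoC and merge the answers per IoC.

    providers maps a provider name to a callable (ioc_type, value) -> dict.
    """
    report = defaultdict(dict)
    for ioc_type, values in iocs.items():
        for value in values:
            for name, lookup in providers.items():
                try:
                    report[value][name] = lookup(ioc_type, value)
                except Exception as exc:
                    # One failing provider should not sink the whole report.
                    report[value][name] = {"error": str(exc)}
    return dict(report)
```

The resulting dictionary, keyed by IoC, is the "single report" that would then be posted to Slack or attached to the incident ticket.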

2. Monitoring Your External Attack Surface

The external attack surface of an organization includes all the external-facing assets that could potentially be exploited by attackers.

These assets include domains, IP addresses, subdomains, exposed services, and more.

Regular monitoring of these assets is important for identifying and mitigating potential vulnerabilities before they are exploited.

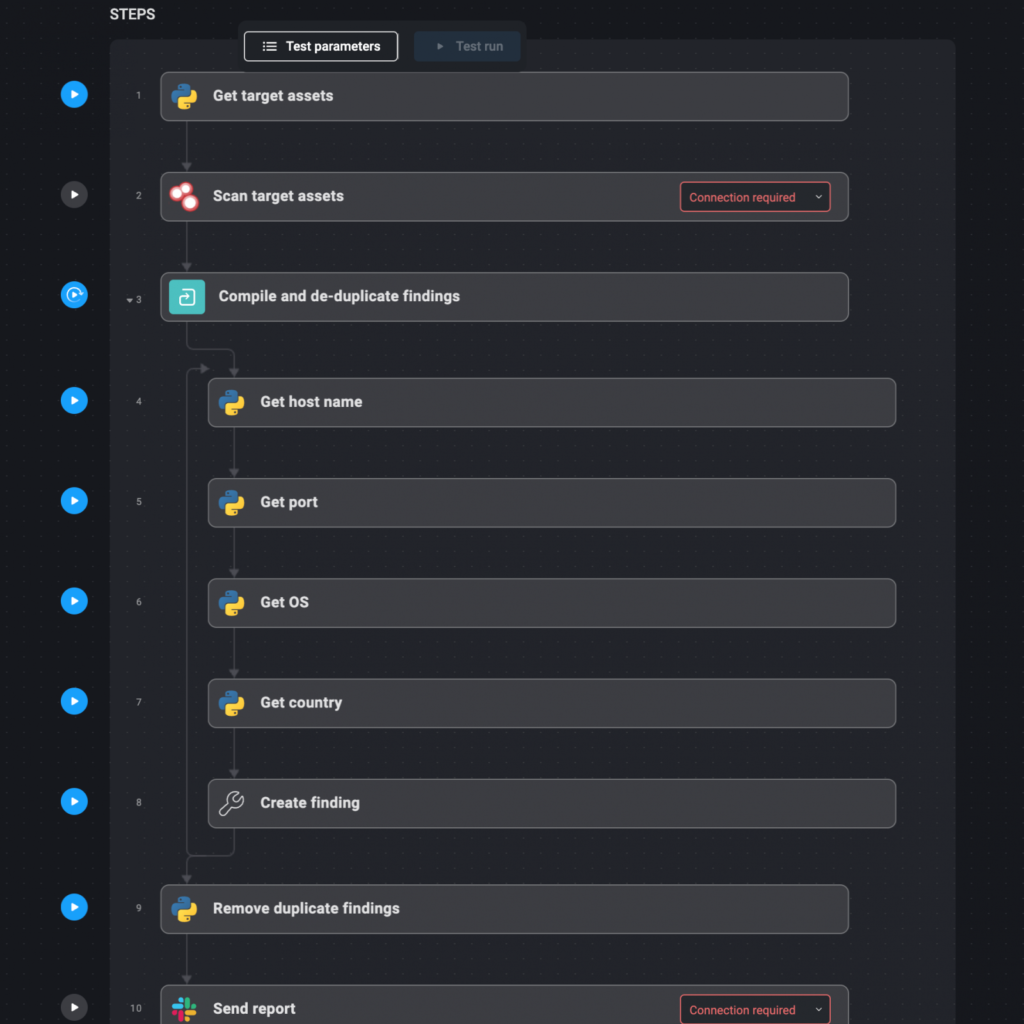

Automation workflow:

- Define Target Assets: Start by defining the domains and IP addresses that make up your external attack surface. These should be documented in a file that the automation system can reference.

- Automated Reconnaissance: Use tools like Shodan to check these assets on a weekly or monthly basis. Shodan can help identify open ports, exposed services, and known vulnerabilities associated with them.

- Compile and De-duplicate Findings: The results from these scans are automatically compiled into a report. Any duplicate findings are removed to ensure that the report is concise and actionable.

- Deliver Weekly Reports: The final report is delivered via email, Slack, or another preferred communication channel. This report highlights new or changed assets, potential vulnerabilities, and any redundant applications that may pose a risk.
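The compile, de-duplicate, and "new or changed" steps above might look like the following sketch. It assumes each scan result has already been reduced to a host, port, and service; the `Finding` type and the `dedupe` and `diff_runs` helpers are hypothetical names for illustration, and the reconnaissance call itself is not shown.

```python
from typing import NamedTuple


class Finding(NamedTuple):
    host: str
    port: int
    service: str


def dedupe(findings):
    """Normalize case and drop repeated findings, keeping first-seen order."""
    seen = set()
    unique = []
    for f in findings:
        key = Finding(f.host.lower(), f.port, f.service.lower())
        if key not in seen:
            seen.add(key)
            unique.append(key)
    return unique


def diff_runs(previous, current):
    """Return findings that appeared or disappeared since the previous run."""
    prev, curr = set(dedupe(previous)), set(dedupe(current))
    return {"new": sorted(curr - prev), "gone": sorted(prev - curr)}
```

Storing each run's de-duplicated findings and diffing against the previous run keeps the report focused on what changed rather than repeating the full inventory every week.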

3. Scanning for Web Application Vulnerabilities

Web applications are frequent targets for attackers, making regular vulnerability scans useful for maintaining security.

Tools like OWASP ZAP and Burp Suite automate the process of identifying common vulnerabilities, including outdated software and misconfigurations.

These scans also detect input validation vulnerabilities, helping to secure web applications.

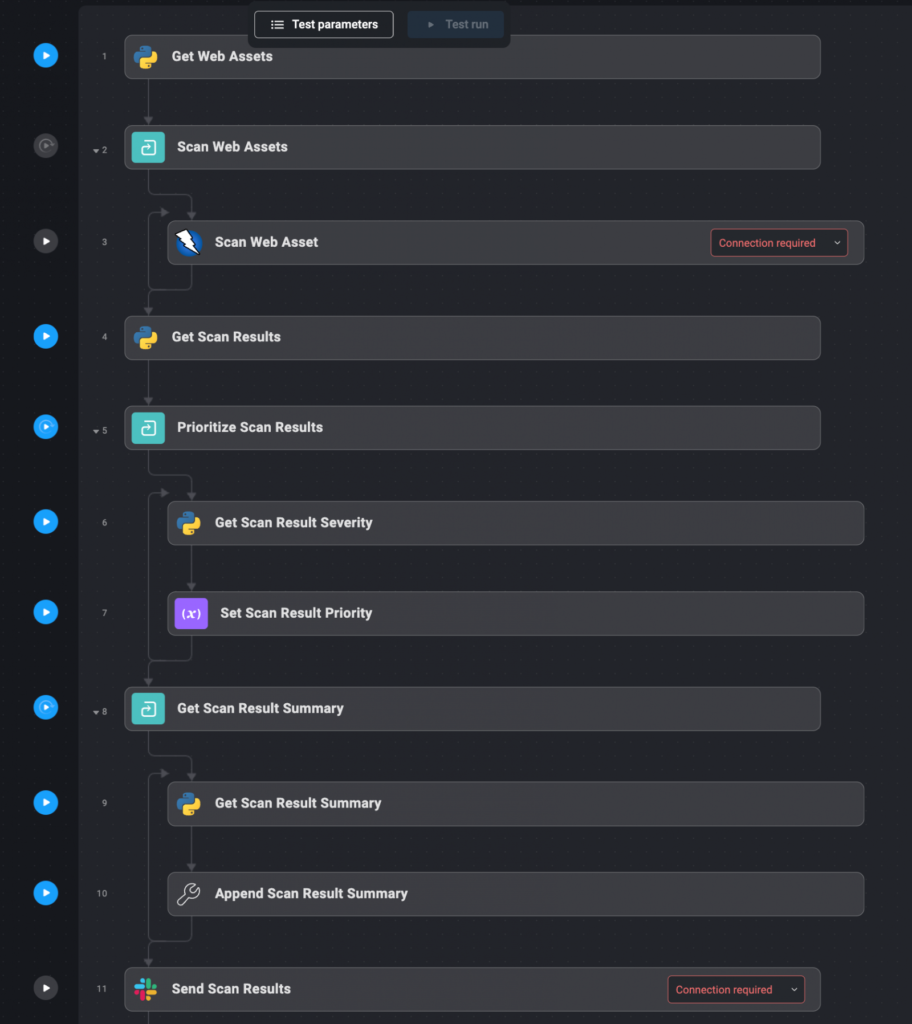

Automation workflow:

- Define Web Assets: Begin by listing all the domains and IP addresses that host your organization’s web applications. These assets should be documented in a file for easy reference by the automation system.

- Automated Vulnerability Scanning: The defined web assets are automatically sent to scanning tools like OWASP ZAP and Burp Suite. These tools perform comprehensive scans to identify vulnerabilities, including those that are commonly exploited by attackers.

- Collect and Prioritize Results: The results from the scans are automatically collected and prioritized based on the severity of the vulnerabilities detected. Critical/severe vulnerabilities are highlighted for immediate action.

- Deliver Results: The prioritized results are delivered to the relevant teams via Slack or as an enriched ticket within the incident management system. This ensures that the right people are notified of the vulnerabilities and can take appropriate action.
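The collect-and-prioritize step above can be illustrated with a short sketch. The finding shape (`url`, `issue`, `severity`) and the severity labels are assumptions made for this example; each scanner reports results in its own format, so a real workflow would first map that output onto a common scale like the one below.

```python
# Assumed common severity scale; lower rank means more urgent.
SEVERITY_RANK = {"critical": 0, "high": 1, "medium": 2, "low": 3, "informational": 4}


def _rank(finding):
    """Numeric rank for a finding; unknown labels sort after all known ones."""
    return SEVERITY_RANK.get(finding["severity"].lower(), len(SEVERITY_RANK))


def prioritize(findings):
    """Sort findings from most to least severe, then by URL for stable output."""
    return sorted(findings, key=lambda f: (_rank(f), f["url"]))


def needs_immediate_action(findings, threshold="high"):
    """Return only the findings at or above the threshold severity."""
    limit = SEVERITY_RANK[threshold]
    return [f for f in prioritize(findings) if _rank(f) <= limit]
```

The output of `needs_immediate_action` is the subset that would be highlighted in the Slack message or ticket, with the full prioritized list attached for reference.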

4. Monitoring Email Addresses for Stolen Credentials

Monitoring for compromised credentials is an important aspect of an organization’s cybersecurity strategy.

Have I Been Pwned (HIBP) is a widely used service that aggregates data from various breaches to help individuals and organizations determine if their credentials have been compromised.

Automating the process of checking HIBP for exposed credentials can help organizations quickly identify and respond to potential security incidents.

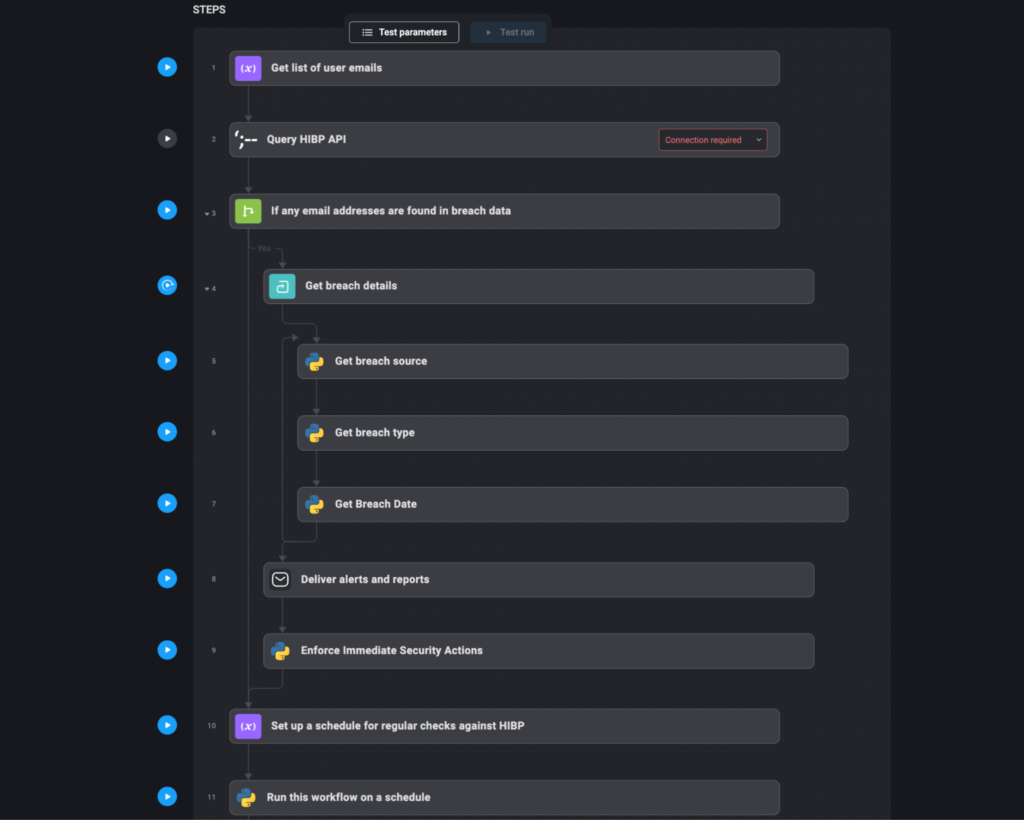

Automation workflow:

- Compile User Emails and Domains: Create a list of user email addresses or domains that need to be monitored. This list should include all relevant user accounts within the organization, especially those with privileged access.

- Query HIBP API: Automatically query the HIBP API with the compiled list of email addresses or domains. This step involves sending requests to HIBP to check if any of the email addresses have appeared in known data breaches.

- Aggregate and Analyze Results: Collect the responses from HIBP. If any email addresses or domains are found in breach data, the details of these breaches (such as the breach source, type of exposed data, and date of the breach) are aggregated and analyzed.

- Deliver Alerts and Reports: If compromised credentials are detected, automatically generate an alert. This alert can be sent via email, Slack, or integrated into the organization’s incident response system as a high-priority ticket. Include detailed information about the breach, such as the affected email addresses, the nature of the exposure, and recommended actions (e.g., forcing password resets).

- Enforce Immediate Security Actions: Based on the severity of the breach, the system can automatically enforce security actions. For example, it might trigger a password reset for affected accounts, notify the users involved, and increase monitoring on accounts that were compromised.

- Regular Scheduled Checks: Set up a schedule for regular checks against HIBP, such as weekly or monthly queries. This ensures that the organization remains aware of any new breaches that might involve their credentials and can respond promptly.
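The query and aggregate steps above could be sketched as follows. `fetch_breaches` targets the HIBP v3 `breachedaccount` endpoint, which requires an API key and a user agent header and is rate limited. The helper names, the user agent string, and the `pause_seconds` parameter are assumptions for illustration. The fetch function is passed into `summarize_exposure` as an argument so the aggregation logic can be exercised without network access.

```python
import json
import time
import urllib.error
import urllib.parse
import urllib.request

HIBP_URL = "https://haveibeenpwned.com/api/v3/breachedaccount/{account}?truncateResponse=false"


def fetch_breaches(email, api_key, user_agent="example-credential-monitor"):
    """Return the list of breaches for one address (empty if none are known)."""
    url = HIBP_URL.format(account=urllib.parse.quote(email))
    request = urllib.request.Request(
        url, headers={"hibp-api-key": api_key, "user-agent": user_agent}
    )
    try:
        with urllib.request.urlopen(request, timeout=10) as response:
            return json.load(response)
    except urllib.error.HTTPError as err:
        if err.code == 404:  # HIBP answers 404 when the account is not in any breach
            return []
        raise


def summarize_exposure(emails, fetch, pause_seconds=0.0):
    """Aggregate breach details per address; fetch is a callable email -> list."""
    report = {}
    for email in emails:
        breaches = fetch(email)
        if breaches:
            report[email] = {
                "breaches": [
                    {
                        "name": b.get("Name"),
                        "date": b.get("BreachDate"),
                        "exposed": b.get("DataClasses", []),
                    }
                    for b in breaches
                ],
                # Flag accounts whose passwords were part of the exposed data.
                "needs_password_reset": any(
                    "Passwords" in b.get("DataClasses", []) for b in breaches
                ),
            }
        time.sleep(pause_seconds)  # stay under the API rate limit (value is an assumption)
    return report
```

A scheduled job might call `summarize_exposure(emails, lambda e: fetch_breaches(e, api_key), pause_seconds=2)` and open a high-priority ticket for every entry where `needs_password_reset` is true.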

Frequently Asked Questions

Below we will answer some frequently asked questions about the automated workflows above and how they can help in a practical way.

- Don’t third-party services offer automation workflows anyway?

Many services provide APIs that allow for automating parts of the workflow, like fetching data. However, building an end-to-end automated workflow typically requires coding and configuration. Replicating the entire workflow with scripts offers flexibility but is more fragile, as changes could break it. Leveraging available APIs with a centralized automation platform provides a more stable, scalable solution.

- Can’t we just replicate this whole thing with Bash scripts?

Yes, it is possible to write Bash/PowerShell scripts to automate the security tasks mentioned in the article. Scripts offer flexibility that is lacking in manual processes. However, scripts require ongoing maintenance, and any changes could break the workflow. They may also lack advanced features like central management, scheduling, alerting, and reporting, which are offered by dedicated automation platforms like Blink Ops. A proper platform is more reliable and efficient for complex, long-running automation requirements.

- How does automating IoC enrichment help?

Automating IoC enrichment speeds up the response process by gathering threat intelligence on indicators like IPs, domains, and file hashes from multiple sources simultaneously via APIs. This provides security teams with a single comprehensive report with the necessary context to assess threats quickly, rather than spending time manually searching different sources. It improves efficiency and situational awareness, enabling informed decisions to be made quickly.
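To make the "multiple sources simultaneously" point concrete, here is a minimal sketch using Python's standard thread pool. `lookup_all` and the `sources` mapping are hypothetical; each source is assumed to be a callable that takes one indicator and returns a dictionary, wrapping whichever threat intelligence API is in use.

```python
from concurrent.futures import ThreadPoolExecutor


def lookup_all(ioc, sources, max_workers=4):
    """Run every source lookup for one IoC in parallel and collect the results."""
    results = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Submit all lookups first so they run concurrently.
        futures = {name: pool.submit(fn, ioc) for name, fn in sources.items()}
        for name, future in futures.items():
            try:
                results[name] = future.result(timeout=15)
            except Exception as exc:
                # Record the failure instead of losing the other sources' answers.
                results[name] = {"error": str(exc)}
    return results
```

Because the lookups are network-bound, running them in threads means the total wait is roughly that of the slowest source rather than the sum of all of them.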

Ref: https://www.bleepingcomputer.com/news/security/4-top-security-automation-use-cases-a-detailed-guide/